Stochastic gradient descent with gradient estimator for categorical features

The broad field of machine learning (ML) provides a wide array of techniques and methods that cover numerous situations. Supply chain, however, comes with its own specific set of data challenges, and sometimes aspects that might be deemed “basic” by supply chain practitioners do not benefit from satisfying ML instruments – at least according to our standards. Such was the case with categorical variables, which are omnipresent in supply chain - for example, to represent product categories, countries of origin, payment methods, etc. Thus, a few of us decided to revisit the notion of categorical variables from a differentiable programming perspective.

In supply chain, categorical variables are frequently missing, not because the data isn’t known, but because the categorization itself does not even always make sense. For example, the cut (straight / skinny / slim / etc.) is applicable to pants but not belts. Hence, the distinction between “missing data” and “non-applicable data” might appear subtle but is nevertheless important. It’s the sort of distinction that differentiates a nicely behaved model from a weirdly behaved one.

The paper below presents a contribution from Paul Peseux (Lokad), Victor Nicollet (Lokad), Maxime Berar (Litis) and Thierry Paquet (Litis).

Title: Stochastic gradient descent with gradient estimator for categorical features

Authors: Paul Peseux, Maxime Berar, Thierry Paquet, Victor Nicollet

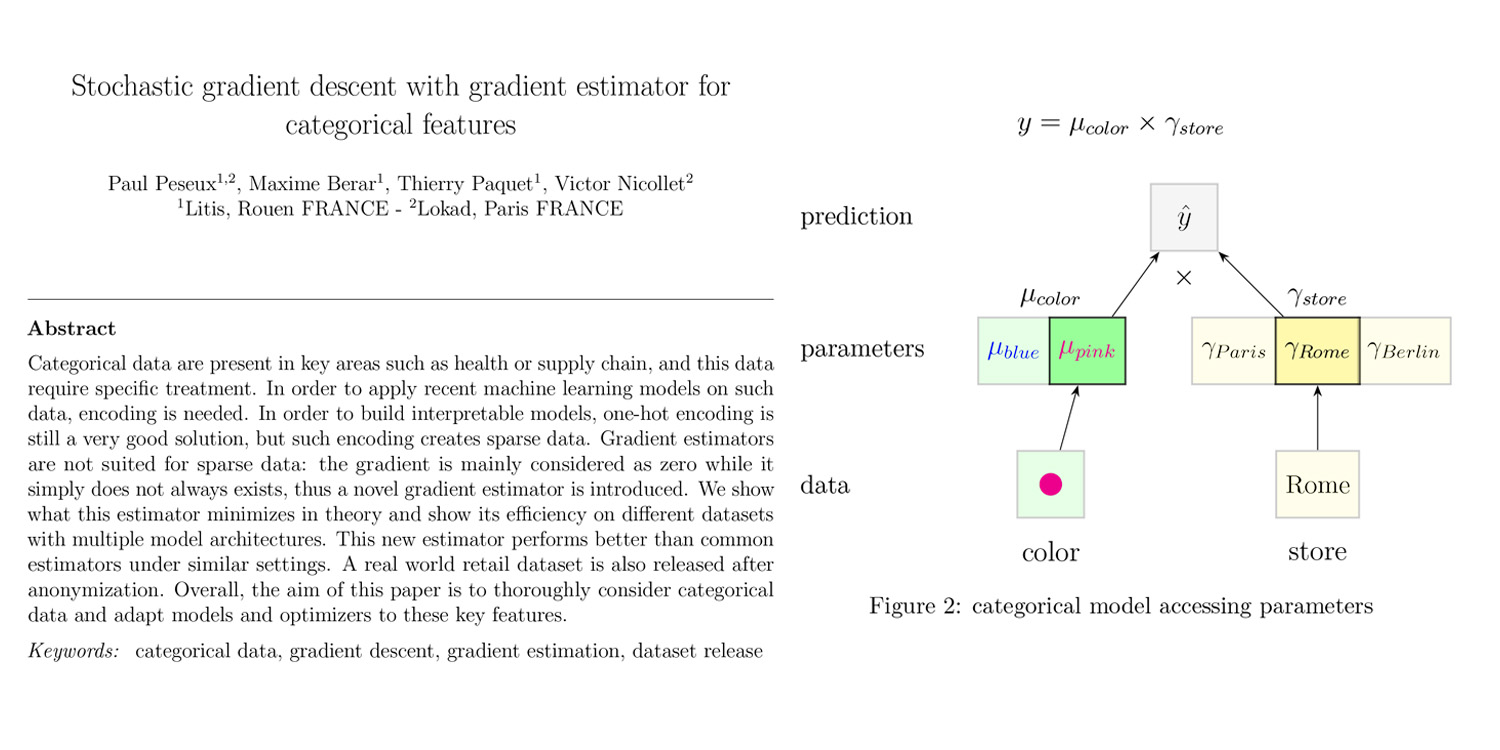

Abstract: Categorical data are present in key areas such as health or supply chain, and this data require specific treatment. In order to apply recent machine learning models on such data, encoding is needed. In order to build interpretable models, one-hot encoding is still a very good solution, but such encoding creates sparse data. Gradient estimators are not suited for sparse data: the gradient is mainly considered as zero while it simply does not always exists, thus a novel gradient estimator is introduced. We show what this estimator minimizes in theory and show its efficiency on different datasets with multiple model architectures. This new estimator performs better than common estimators under similar settings. A real world retail dataset is also released after anonymization. Overall, the aim of this paper is to thoroughly consider categorical data and adapt models and optimizers to these key features.